Information technology (IT) plays a crucial role in the success of any organization today, and this has been especially true throughout my career in IT leadership. Reflecting on my journey, I’ll admit there were moments when it felt like I was simply figuring things out as I went along. My early IT knowledge was focused mainly on data management and software conversions, leaving me with a steep learning curve in other areas. Thankfully, I had the support of a technology consultant who shared invaluable insights and helped me not only understand technology better but also learn how to manage an IT department effectively. Later, when I moved to Arizona, I was fortunate to work under a mentor who guided me in refining my leadership abilities and shaping my path to becoming a Chief Information Officer (CIO).

Many nonprofits, small businesses, and community organizations cannot afford a full-time technology leader. Some fully outsource their IT functions, while others rely on external vendors to supplement their internal tech teams. Over the years, CEOs have shared with me their concerns about whether they are truly getting what they need while staying up to date with technology. They are often faced with making decisions to fund solutions that no one within the organization fully understands. In this environment, trust in the systems, amid threats like malware, hackers, and phishing attacks (emails designed to trick individuals into disclosing sensitive information or opening backdoors to systems), often relies more on blind faith or hope than on informed decision-making.

A virtual Chief Information Officer (vCIO) can be invaluable for organizations that need expert guidance without the resources of a full-time executive. A vCIO brings the strategic IT knowledge that CEOs often need, helping to align technology initiatives with an organization’s mission and business goals. They offer valuable insights into IT management and strategy, ensuring that technology supports the overall vision of the organization. Moreover, using a vCIO doesn’t require changes to existing IT vendors or systems. On a short-term or long-term basis, our vCIOs can step in to fill gaps, whether that means helping hire and mentor a new IT Director or CIO or stepping in directly when qualified talent is hard to find. This option can be more affordable than hiring full-time executive-level staff. TBD Solutions understands these needs. Our technology leaders have over 80 years of combined experience and provide:

Strategic Planning: Developing long-term goals and plans for how technology will be used within the organization.

Technology Budgeting and Cost Containment: Creating and managing high-quality IT resources that meet organizational needs while ensuring efficacy and cost-effectiveness.

Risk Mitigation: Evaluating potential risks associated with the organization’s technology use and implementing measures to mitigate them.

Cybersecurity Strategies: Establishing compliance-oriented cybersecurity protocols to protect organization data and systems.

Technology Evaluation: Regularly assessing current technology to ensure it meets the organization’s needs and provides sound return on investment.

Guidance on Technology Trends: Keeping abreast of new technology trends and advising the organization on potential adoptions and innovations.

Creating Efficiencies in Human Capital: Working with business partners to understand needs and put systems in place to create efficiencies.

Mentoring: Providing mentorship to technology staff.

Vendor Management: Managing relationships and contracts with technology vendors.

Have you ever ordered your favorite pizza through an online app just to be told, “We don’t deliver that far”? I am always disappointed when I can’t get my pizza delivered straight to my door. Because timely delivery matters to me, I like to assess the service industries present in a neighborhood that I haven’t visited in a long time. I take note of things such as new restaurants, urgent care centers, and, of course, pizza places.

I often wonder what logistical decisions were made to meet the needs of the customers in the surrounding area. How does a service company, such as a healthcare organization, evaluate whether it has reasonably met the needs of a neighborhood to provide the best customer service possible? Delivering the best services can come down to two major, often overlooked factors: drive time and distance to the people served.

Improving Customer Care

If I am running a food delivery company like Grubhub, or I work for a healthcare provider who has to offer in-home nursing services, I need to have a strong understanding of drive time and distance. This insight not only provides understanding of the operational cost, but also allows service providers to gain perspective on the needs of their customers.

Drive time and distance metrics are just one way to measure customer satisfaction and the improvement of customer service. Considering the impact of these two metrics and ensuring people are within a reasonable driving distance to the services are essential. The internet hosts a wealth of resources that helped me tackle a specific client use case, and I would like to share the use case in this blog.

Tackling a Use Case

I was contracted by a client to evaluate drive time and distance for a healthcare provider that offers healthcare services for residents in a 3,000-square-mile area. The drive time and distance were measured door to door for a specific type of healthcare service, starting from the patient’s location and going to a provider’s location. The client asked me to determine whether there were sufficient provider locations within a reasonable distance and drive time for all residents in those 3,000 square miles.

One of the key requirements in my use case was to create a solution that would sustain current and future needs with little human intervention. Here’s a high-level overview of the various aspects I considered when approaching this client’s request.

Application Programming Interfaces (APIs) were the first thing I considered. If you are not familiar with APIs, they are interfaces that let you access data from other domains or sites on the internet. Web and REST APIs are two types that allow you to accomplish unattended data extraction over the web using HTTP. If you’ve never evaluated the map services available online, I assure you that there is plenty of information about them, as well as guidance on how to use those resources.

Choosing an effective map service was an important part of this process. When selecting map services, I found various vendors offering map APIs on the web. Google Maps, with over one billion monthly active users, is a popular choice. However, other options like ArcGIS and Azure Maps also provide APIs to meet drive time and distance requirements. Ultimately, I recommended Microsoft’s Azure Maps because of its comparable features and Microsoft’s status as a preferred vendor for many customers.

Acquiring Azure Maps was the next step in this evaluation. I set up a new Azure free account, which gave me a $200 credit for 30 days and additional free services for 12 months.

Azure Maps has a “pay as you go” model. The cost is based on the number of transactions per month. I receive thousands of free transactions each month, with subsequent transactions at 50 cents per 1,000, which is quite reasonable compared to other services.
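To make the pay-as-you-go arithmetic concrete, here is a minimal Python sketch. The 50 cents per 1,000 transactions comes from the paragraph above; the free allowance of 5,000 transactions and the assumption that billing is prorated rather than rounded up to whole blocks of 1,000 are illustrative only, so check the current pricing page before relying on either.

```python
def monthly_cost(transactions: int, free_transactions: int,
                 rate_per_1000: float = 0.50) -> float:
    """Estimate a monthly bill in dollars under a pay-as-you-go model.

    free_transactions is a hypothetical allowance; the real figure depends
    on the service tier. Billing is assumed to be prorated per transaction.
    """
    billable = max(0, transactions - free_transactions)
    return billable / 1000 * rate_per_1000


# Hypothetical month: 105,000 route lookups with 5,000 free transactions.
estimate = monthly_cost(105_000, free_transactions=5_000)
print(f"Estimated bill: ${estimate:.2f}")
```

Running an estimate like this against the expected number of origin and destination pairs is a quick way to size a project before committing to a service.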

I highly encourage anyone delving into this topic to do more research about the types of APIs, cost, transaction types, number of transactions, and how the number of transactions is computed before committing to a product or a service. Another driving factor for my choice was that Azure Maps offered a route service that returns the driving time and distance for any time of day, even on a future day.
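As a rough illustration of what a route request looks like, the Python sketch below builds a request URL and reads drive time and distance out of a response. It is written in Python rather than the C# used on the project, and it makes no network call. The endpoint, the `query`, `departAt`, and `subscription-key` parameters, and the `lengthInMeters` and `travelTimeInSeconds` fields follow my reading of the Azure Maps Route Directions REST reference (version 1.0); treat them as assumptions to verify against the current documentation. The coordinates, key, and sample response are made up.

```python
import json
from datetime import datetime
from urllib.parse import urlencode

# Assumed endpoint for the Route Directions service (api-version 1.0).
ROUTE_URL = "https://atlas.microsoft.com/route/directions/json"


def build_route_url(origin, destination, depart_at, subscription_key):
    """Build a GET URL for one origin-to-destination driving route."""
    params = {
        "api-version": "1.0",
        # "lat,lon:lat,lon" from the patient's location to the provider's.
        "query": f"{origin[0]},{origin[1]}:{destination[0]},{destination[1]}",
        "departAt": depart_at.isoformat(),
        "subscription-key": subscription_key,
    }
    return f"{ROUTE_URL}?{urlencode(params)}"


def parse_summary(response_text):
    """Return (miles, minutes) from the first route in a JSON response."""
    summary = json.loads(response_text)["routes"][0]["summary"]
    miles = summary["lengthInMeters"] / 1609.344
    minutes = summary["travelTimeInSeconds"] / 60
    return round(miles, 1), round(minutes, 1)


# Hypothetical inputs; the response body is a hand-written stand-in.
url = build_route_url((42.1, -85.5), (42.3, -85.6),
                      datetime(2030, 1, 7, 8, 0), "YOUR-KEY")
sample = '{"routes": [{"summary": {"lengthInMeters": 16093, "travelTimeInSeconds": 900}}]}'
print(url)
print(parse_summary(sample))
```

Keeping URL construction and response parsing in separate functions makes both easy to test without spending transactions.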

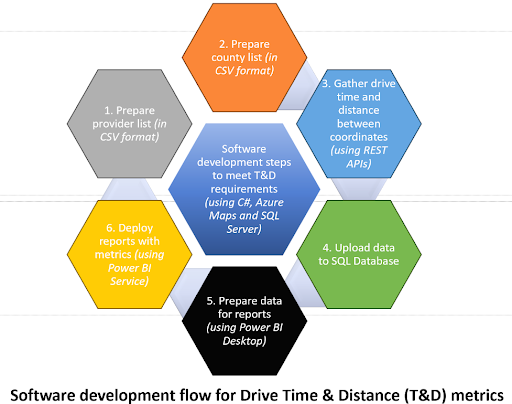

Creating a solution based on my research and available tools was the final step in delivering for the client. I used C# as the primary programming language and SQL Server as my database for this solution. I used JSON and CSV as primary data formats. I mapped many coordinates within the 3,000-square-mile boundary to various healthcare provider locations and acquired driving time and distance using Azure Maps APIs. The accompanying figure gives a high-level view of how the solution was built. A detailed description of the steps taken is beyond the scope of this blog.

Calculating drive time and distance is just one way metrics can be intertwined with good customer service. I demonstrated to my client that the healthcare provider had strategically positioned an appropriate number of locations across the 3,000-square-mile area. Each customer could access services within an acceptable drive time and distance. By achieving this balance, the provider ensured efficient service delivery and customer satisfaction.
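The coverage question itself reduces to a simple check once the route results are collected. The Python sketch below is one possible way to express it, not the project’s actual C# and SQL Server implementation. The `Trip` record, the resident and provider IDs, and the 30-mile and 30-minute limits are all hypothetical. A resident counts as served when at least one provider is within both limits.

```python
from dataclasses import dataclass


@dataclass
class Trip:
    """One door-to-door route result from a resident to a provider."""
    resident_id: str
    provider_id: str
    miles: float
    minutes: float


def underserved(trips, max_miles, max_minutes):
    """Return the sorted IDs of residents with no provider inside both limits."""
    residents = {t.resident_id for t in trips}
    served = {
        t.resident_id
        for t in trips
        if t.miles <= max_miles and t.minutes <= max_minutes
    }
    return sorted(residents - served)


# Made-up route results for two residents and two provider locations.
trips = [
    Trip("R1", "P1", miles=12.0, minutes=20.0),
    Trip("R1", "P2", miles=40.0, minutes=55.0),
    Trip("R2", "P1", miles=45.0, minutes=70.0),
    Trip("R2", "P2", miles=38.0, minutes=50.0),
]
print(underserved(trips, max_miles=30, max_minutes=30))
```

An empty result means every sampled location has at least one provider within reach; any IDs returned point to where an additional location might be needed.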

Next time you open a pizza box, you’ll hopefully chew on the idea of how drive time and distance can impact service delivery.

Artificial Intelligence (AI) tools for business are becoming commonplace. AI is poised to be the next big Information Technology (IT) game changer. Considering the possibilities, AI may ultimately have a larger impact than mobile computing and devices like the iPhone. AI, specifically generative AI, is dominating IT advertising and marketing and is generating lots of interest from average technology users due to easy access and low cost of available systems. Here are some practical considerations related to using AI systems safely in your company.

What can you do with generative AI tools today in your organization? Generative AI tools are being used by chatbots to provide simple customer support and access to frequently asked questions. Generative AI can read transcripts of meetings and provide summaries, generate content for marketing or email drafting, and assist with data entry tasks. Language translation and programming code writing are additional areas where AI can be a competent assistant.

Generative AI tools like ChatGPT or Google’s Bard belong to a subgroup called large language models, or LLMs. A simple explanation of an LLM is a system that can read and interpret the context of a question and then formulate an answer based on what it learned from content such as books, articles, and websites accessible via the internet.

The key thing to understand about large language model AI systems is that the vendor may use your questions, also known as prompts, to improve the performance of its models. There should be no expectation of privacy when using these public, cloud-based tools. You and your employees must keep this in mind before using these AI tools. You can learn the specifics of a tool’s privacy policy by examining the end-user license agreement (EULA).

Employees in your company may already be using AI tools without explicit permission from management. When using ChatGPT or similar tools for company-related work, users must be careful not to expose company secrets and confidential data. According to data from Cyberhaven, as of June 2023, 11 percent of employees have used ChatGPT and 9 percent have pasted company information into ChatGPT. Nearly 5 percent of this data was estimated to be confidential data [1].

There have also been examples of bugs or errors in the system exposing user data to other users. On March 21, 2023, ChatGPT was temporarily shut down to fix a problem that linked prior conversations with the wrong user, potentially exposing confidential data to the incorrect user [2]. Any expectation of privacy was broken in this situation. Do not share information that you would not share in a public forum.

On April 6, 2023, Samsung discovered employees were debugging source code and putting transcripts of meetings with confidential data into ChatGPT. This was only a few weeks after Samsung lifted a ban on ChatGPT. Samsung subsequently enacted procedures to limit the amount of data that could be sent to about 750 words.

Using an LLM within your business environment carries risks; however, there are numerous ways to mitigate them.

Blanket Ban. Employers can establish policies and implement procedures to prevent employees from accessing these sites or downloading software on company assets.

Access Controls. If your company has implemented robust protocols to limit access to sensitive data, consider expanding these controls to include generative AI systems.

Enterprise License. Companies that choose to use these tools can seek special license agreements that limit what the vendor can do with the inputs it receives. Companies can exclude all inputs from being used for training, or they can allow the data to be used to improve the model only for their own use, excluding all other parties.

Offline Systems. There are various LLM systems that can be downloaded and run on local computers that do not connect back to the internet. This option provides the most protection for your company data; however, it is often not a viable option for smaller companies due to the high technical requirements for installation and maintenance.

Sensitive Data. If your company handles data subject to PCI or HIPAA requirements, such as protected health information (PHI), you need to be extremely cautious with how you handle inputs into an LLM. You either need to be able to completely scrub the source data to obfuscate PHI or you need to use an offline or private model. Additionally, you should seek a HIPAA Business Associate Agreement with the vendor to protect any PHI.

Employee Awareness. Educating your employees, raising awareness of trade secrets, and protecting sensitive information are key to keeping information from leaking into public view. Companies should consider adding specific language to their existing Acceptable Use Policy and updating employee handbooks to reflect the use of these tools.
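To give a feel for what scrubbing inputs can look like in practice, here is a minimal Python sketch that masks a few obvious identifiers before text is pasted into a prompt. The three patterns (email addresses, US Social Security numbers, and US-style phone numbers) and the placeholder labels are illustrative assumptions. Pattern matching like this will miss names, addresses, dates, and many other identifiers, so on its own it should not be treated as HIPAA de-identification.

```python
import re

# Illustrative patterns only; real PHI takes many more forms than these.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def scrub(text: str) -> str:
    """Replace each matched identifier with a bracketed placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


# Made-up record. Note that the patient's name is NOT caught.
note = "Patient Jane (jane.doe@example.org, 555-867-5309) SSN 123-45-6789."
print(scrub(note))
```

The name surviving in the output is the point of the example: automated scrubbing reduces exposure, but a human review or a private model is still needed for regulated data.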

AI tools are improving at phenomenal rates. They can artfully develop written and graphical content, but caution should be exercised when using content generated by large language models.

Systems like ChatGPT can produce output that is not 100 percent accurate. There is a phenomenon with these systems called AI hallucination. An AI hallucination is when the AI generates incorrect information but presents it as fact, and might even cite a made-up source [3]. This may happen if the model does not understand the prompt or if its training data does not contain the required information. There are many tactics you can employ to reduce these hallucinations, such as rephrasing the prompts and limiting possible outcomes by framing the prompts.

Always review the output of the LLM, especially if that output may be reused elsewhere within your company. Consider the AI’s output a rough draft: always verify the facts being asserted, and rewrite the output in your own voice and style.

Generative AI systems have the potential to be a strong tool within business environments. If your company does not have a policy related to using tools like ChatGPT or Bard, create one and educate your users. It is important that your employees understand how to protect sensitive data, whether it is company secrets or regulated data.

Information Technology (IT) security is a never-ending race to keep pace with defending against what the “Bad Guys” are trying to exploit. Your IT needs to be vigilant for all kinds of threats. Some of the most common include:

Most of us will be familiar with the standard ways to protect your organization from these attacks. The strongest protection will include layers of technology like firewalls, anti-virus solutions, or newer classes of comprehensive endpoint threat analysis. It is wise to include DNS filtering as well as email filter rules in this set of protection tools.

However, technology alone will not completely protect you; it cannot stop every exploit. This is because the “Bad Guys” know how to manipulate human behavior and get people to do things that they might not normally do. Therefore, any mitigation strategy must account for the greatest vulnerability:

The Human Element.

It starts with creating skeptics at your workplace. Train your users to spot these scams and distrust all unsolicited incoming messaging regardless of the method. Scammers will use email (phishing), text messages, voice calls (vishing), even social media for their exploits; no communication method is safe. Here are common traits that could indicate a scam:

While I have been discussing this in the context of your organization, these traits also apply to scams that are directed at individuals in their homes. Your training regimen should make sure people use these skills in all aspects of their lives, not just at work.

For training, I would recommend using a service. There are many companies out there that offer training for users, including some innovative firms that first evaluate your users and then provide adapted training based on the results. The best practice in this area is to evaluate your users regularly and to offer recurring training often. If your organization performs third-party security audits (as is required of HIPAA covered entities and companies that must comply with SOC or SOX), you should also ask the vendor to test your users with phishing and/or vishing attempts.

The last thing I want to discuss is how to best protect your firm if, despite all the efforts above, your company becomes a victim of one of these scams.

Number One: Invest in cyber and fraud insurance to protect your company. Most companies are aware of this insurance and have an active policy. However, given the huge rise in ransomware and crypto-locker style attacks, these premiums are rising fast to account for the new threats. It would be wise to review this with your accounting or finance department.

Number Two: Make sure your Disaster Recovery (DR) plan is up to the job. Are your backups immutable, meaning that they cannot be changed after they are saved? Having true offline and offsite tape storage is one way to do this. It can also be done with some backup software and appliances that use AWS or Azure storage for cloud backup. Testing and validating the restoration process must be something that your IT Department practices. This validation goes beyond the simple occasional file recovery that the IT department will manage. Has your IT Team tested their ability to perform a “bare metal” restore of major systems? If not, ask them to!

Number Three: Embed a Communications Plan in your Disaster Recovery/Business Continuity Plan. It is important to plan how to communicate any breach internally as well as externally. Depending on the industry, there may be disclosure requirements that need to be followed. Engage with legal and communication departments during your planning and testing sessions.
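One simple way to validate a restore test, mentioned under Number Two, is to compare checksums of the restored files against the originals. The Python sketch below is a minimal illustration under stated assumptions: both copies are reachable as ordinary folders, and the function names are my own. It does not replace the verification features of your backup product or a full bare-metal test.

```python
import hashlib
from pathlib import Path


def sha256_of(path):
    """Hash a file in 1 MiB chunks so large files do not fill memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root):
    """Map each file's path (relative to root) to its SHA-256 checksum."""
    root = Path(root)
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def compare(source_manifest, restored_manifest):
    """Return (missing, changed) file lists for a restored copy."""
    missing = sorted(set(source_manifest) - set(restored_manifest))
    changed = sorted(
        name
        for name in source_manifest.keys() & restored_manifest.keys()
        if source_manifest[name] != restored_manifest[name]
    )
    return missing, changed
```

A restore test would call `build_manifest` on the source folder and on the restored folder, then pass both to `compare`; two empty lists mean every file came back intact.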

Disaster Recovery and Business Continuity Planning is a subject that your IT Team must be able to articulate to your organization’s leadership. The team should also have a planned process, tested annually, for recovering and continuing normal business operations. Ideally, they will have a playbook or some other documented process that they can follow in case of a major incident. While the IT Team cannot account for every type of contingency, you want to minimize the need to problem-solve during the incident.

By approaching IT Security as a collection of systems and understanding how scammers exploit ‘The Human Element’, you can build resilient and recoverable systems to protect your organization.